AI is rewriting the data-centre playbook, but the next big opportunity may be at the edge

For years, the data-centre industry grew steadily in the background: more cloud adoption, more video, more software, more storage. AI has changed that. Data centres are no longer just digital real estate; they are becoming a core part of national industrial strategy, energy planning, and competitive advantage. The International Energy Agency now expects global data-centre electricity consumption to roughly double by 2030, to about 945 TWh, with AI the single largest driver of that growth.
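
To put the IEA projection in annual terms, the compound growth rate implied by reaching about 945 TWh by 2030 can be computed directly. The 2024 baseline of roughly 415 TWh is an assumption taken from the IEA's own published estimate rather than from this article; swapping in a different baseline shows how sensitive the rate is.

```python
def implied_cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that takes `start` to `end` over `years` years."""
    if start <= 0 or years <= 0:
        raise ValueError("start and years must be positive")
    return (end / start) ** (1.0 / years) - 1.0


# Assumed 2024 baseline and the ~945 TWh projection for 2030 cited above.
BASELINE_TWH_2024 = 415.0  # assumption: IEA estimate, not stated in this article
PROJECTED_TWH_2030 = 945.0

rate = implied_cagr(BASELINE_TWH_2024, PROJECTED_TWH_2030, 2030 - 2024)
print(f"Implied growth: {rate:.1%} per year")

# A pure doubling over the same six years, for comparison.
print(f"Doubling in 6 years: {implied_cagr(1.0, 2.0, 6):.1%} per year")
```

With these inputs the implied rate is roughly 15% a year, against about 12% a year for a straight doubling over six years.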

This shift matters because AI does not simply add more of the same demand; it changes the physical profile of compute. Training large models concentrates huge amounts of power, cooling, networking, and capital into a small number of locations. Inference then spreads those models into daily use, potentially across billions of requests. The result is an industry that is simultaneously centralising and decentralising: ever larger campuses for frontier training and a growing case for smaller, more distributed facilities closer to users.

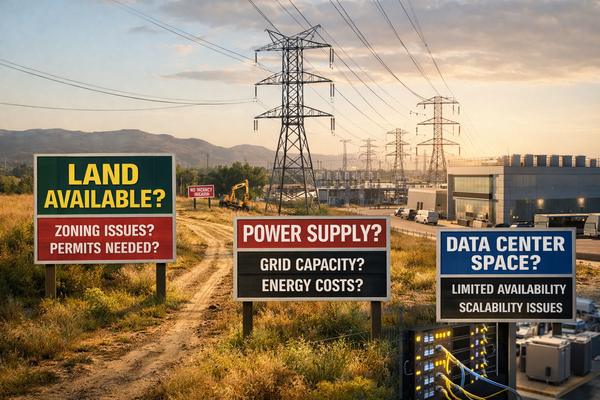

The growth story is real, but so are the bottlenecks

The headline story is often “AI needs more compute.” The harder reality is that scaling is increasingly constrained by everything around the chip: power availability, interconnection queues, transformers, switchgear, liquid-cooling infrastructure, workforce, permitting, and construction timelines. Uptime Institute’s 2025 survey describes an industry facing rising costs, worsening power constraints, staffing challenges, supply-chain delays, and mounting pressure from AI workloads.

The most important constraint may be time to power. Research on large-load growth in North America shows data centres accounted for nearly 80% of large-load requests submitted to utilities across the Western interconnection region in 2024. The same work notes that new large-load facilities can be built in roughly 1.5 to 2 years, new generation often takes 3 to 5 years, and new transmission can take much longer. At the same time, transformer fleets are aging, prices have risen sharply, and lead times for key electrical equipment have stretched materially since 2022. In other words, the limiting factor is often not the willingness to invest; it is the ability to synchronise power infrastructure, site delivery, and IT deployment.
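
The synchronisation problem can be made concrete with a toy schedule: a site cannot energise until its slowest dependency is ready, so the gap between building readiness and power readiness is capacity that has been paid for but is not yet earning. The lead times below are midpoints of the ranges quoted above; the dictionary keys and the `energisation_gap` helper are hypothetical names used only for illustration.

```python
# Hypothetical lead times in years, using midpoints of the ranges quoted above.
LEAD_TIMES_YEARS = {
    "facility_build": (1.5 + 2.0) / 2,   # new large-load facility
    "new_generation": (3.0 + 5.0) / 2,   # new generating capacity
}


def energisation_gap(lead_times: dict[str, float], site_key: str) -> tuple[str, float]:
    """Return the slowest dependency and how long a finished site waits for it."""
    bottleneck = max(lead_times, key=lead_times.get)
    gap = lead_times[bottleneck] - lead_times[site_key]
    return bottleneck, max(gap, 0.0)


bottleneck, gap = energisation_gap(LEAD_TIMES_YEARS, "facility_build")
print(f"Bottleneck: {bottleneck}; finished site waits ~{gap:.2f} years")
```

Transmission is left out because no figure is given for it; including it could only lengthen the wait.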

This is why AI has turned data-centre development into a systems problem, which you cannot solve by procuring GPUs alone. You need utility relationships, grid capacity, mechanical design that can evolve toward higher-density liquid cooling, resilient supply chains for electrical plant, and a realistic view of how long approvals and energisation actually take. That systems complexity is one reason capital is flowing not just into campuses, but also into substations, onsite generation, cooling innovation, and grid-adjacent energy strategies.

The environmental and social bill is larger than the carbon headline

The environmental debate is often reduced to carbon, but that is far too narrow. Data centres also consume water, occupy land, create local noise and traffic, and can strain community infrastructure if growth is poorly planned. The IEA estimates that a typical 100 MW hyperscale facility in the United States can consume around 2 million litres of water per day when direct and indirect water use are combined, and it projects global data-centre water consumption to rise sharply by 2030. It also estimates indirect CO2 emissions from data centres at around 180 million tonnes today, with emissions still rising in a sector where many others are expected to stabilise or decarbonise more quickly.
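
The IEA water figure is easier to compare across sites when expressed per unit of energy. A minimal conversion, assuming the 100 MW is the facility's total draw and that it runs at that full load around the clock; real sites usually run below nameplate, which would raise the per-kWh figure:

```python
def litres_per_kwh(litres_per_day: float, load_mw: float, utilisation: float = 1.0) -> float:
    """Water intensity in litres per kWh for a site drawing `load_mw` at `utilisation`."""
    kwh_per_day = load_mw * 1_000 * 24 * utilisation  # MW -> kW, times 24 h
    return litres_per_day / kwh_per_day


# 2 million litres/day for a 100 MW facility, per the IEA estimate cited above.
# utilisation=1.0 is an assumption (full load, 24x7).
intensity = litres_per_kwh(2_000_000, 100)
print(f"~{intensity:.2f} L/kWh")
```

Under that full-load assumption the result is a little over 0.8 litres per kWh.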

Those impacts are social as well as environmental. The World Resources Institute argues that the quality of governance around energy procurement, water use, land siting, community engagement, and cost recovery will determine whether data-centre growth benefits local economies or shifts risk onto residents. Academic work has made a similar point more bluntly: the local consequences can include water stress, noise, infrastructural burden, and uneven distribution of costs and benefits. One consistent problem is transparency. Several researchers note that disclosures on workload-level energy, water, and supply-chain impacts remain incomplete, which makes honest sustainability accounting harder than it should be.

None of this means data centres are uniquely problematic. It means they should now be treated like major industrial infrastructure, because that is what they are becoming. The question is not whether the world needs more compute. It clearly does. The real question is where that compute should sit, how it should be powered and cooled, and whether the host community sees tangible local value rather than only higher demand on shared systems.

What does the future hold: bigger training clusters, or an explosion of inference?

In the near term, frontier training will continue to favour very large, highly concentrated facilities. The reason is technical as much as economic: training performance depends on tightly coupled networking, extremely high power density, and operational coordination that is easier to achieve on a handful of very large campuses than across a fragmented estate. The power required for individual training runs has already moved into utility-scale territory, and scenario work suggests that later this decade the largest training clusters could become dramatically larger still, though those projections remain uncertain rather than settled forecasts.

But inference is likely to become the bigger long-run story. Training is intensive, but episodic. Inference is repetitive and ubiquitous. A growing body of research argues that inference already accounts for most of the computing effort associated with deployed AI systems, and newer work on large-model inference suggests that energy per query can rise sharply when longer outputs and test-time scaling are used. MIT researchers have made the point clearly: as generative AI becomes embedded in everyday applications, the cumulative energy demand of inference may eventually dominate because every prompt, search, agent step, and automated decision becomes a recurring load.
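
The training-versus-inference argument reduces to simple arithmetic: a training run is a one-off energy cost, while inference accumulates with every request. The sketch finds how long a deployed model takes for cumulative inference energy to overtake its training energy. All three inputs are hypothetical placeholders chosen for round numbers, not measurements of any real model or service.

```python
def days_to_overtake(training_wh: float, wh_per_query: float, queries_per_day: float) -> float:
    """Days until cumulative inference energy equals the one-off training energy."""
    queries_needed = training_wh / wh_per_query
    return queries_needed / queries_per_day


# Hypothetical inputs for illustration only.
TRAINING_WH = 10e9        # 10 GWh for one training run (assumed)
WH_PER_QUERY = 0.3        # energy per inference request (assumed)
QUERIES_PER_DAY = 1e9     # daily request volume (assumed)

days = days_to_overtake(TRAINING_WH, WH_PER_QUERY, QUERIES_PER_DAY)
print(f"Inference overtakes training after ~{days:.0f} days")
```

Doubling the energy per query, as longer outputs or test-time scaling might, halves that interval.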

That does not mean training stops mattering; it means the industry may bifurcate. Training will likely remain concentrated in a relatively small number of very large facilities. Inference, by contrast, could spread across a much broader range of locations and architectures depending on latency requirements, privacy constraints, bandwidth economics, and the physical opportunity to reuse heat.

The edge opportunity: not magic, but potentially very powerful

This is where the edge hypothesis becomes interesting. Suppose a building has a durable 24x7 demand for hot water or low-temperature heat: hotels, student accommodation, hospitals, leisure facilities, mixed-use developments, some office campuses with gyms and catering, or clusters linked by a local heat network. Now suppose a small edge data centre is located there, uses liquid cooling, and captures the heat produced by inference servers. The electricity first performs useful computation; almost all of it then appears as heat, which can be recovered and upgraded where necessary for domestic hot water or space heating. That is not “free energy,” but it is a much better use of the energy already being consumed.
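
A rough energy balance shows the scale involved. The sketch converts a day of IT load into the volume of water the recovered heat could raise from mains temperature to 60°C. The 100 kW load, 80% recovery fraction and 10°C inlet temperature are assumptions, and the calculation deliberately ignores temperature grade, so it is an energy-only upper bound.

```python
SPECIFIC_HEAT_WATER_KJ_PER_KG_K = 4.186  # approx., liquid water
KJ_PER_KWH = 3_600


def hot_water_litres_per_day(it_load_kw: float, recovery_fraction: float,
                             inlet_c: float, target_c: float) -> float:
    """Litres/day of water heatable from inlet_c to target_c by recovered IT heat.

    Energy-only estimate: assumes 1 litre of water ~ 1 kg and ignores whether
    the recovered heat is hot enough to reach target_c without a heat pump.
    """
    heat_kwh = it_load_kw * 24 * recovery_fraction
    kwh_per_litre = SPECIFIC_HEAT_WATER_KJ_PER_KG_K * (target_c - inlet_c) / KJ_PER_KWH
    return heat_kwh / kwh_per_litre


# Hypothetical edge site: 100 kW IT load, 80% of heat captured, 10 -> 60 degC.
litres = hot_water_litres_per_day(100, 0.8, inlet_c=10, target_c=60)
print(f"~{litres:,.0f} litres/day")
```

On these assumptions the answer is roughly 33,000 litres a day.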

The thermodynamics matter. It would be misleading to call this literally energy neutral. The servers still consume electricity. Pumps, controls, backup systems, and sometimes heat pumps also consume electricity. And raw server waste heat is often too low-grade to meet hot-water requirements directly: building-services guidance typically calls for stored domestic hot water to be kept at around 60°C, largely to control Legionella, while recovered data-centre heat may arrive at much lower temperatures unless the cooling system is designed for higher-temperature liquid loops. In practice, useful schemes depend on liquid cooling, smart heat-exchanger design, and often some temperature lift.
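
The cost of that temperature lift can be estimated from the ideal (Carnot) heating COP of a heat pump, T_hot / (T_hot - T_cold) in kelvin. Real machines reach only a fraction of that limit; the 50% fraction, the 35°C source and 65°C sink temperatures, and the 80 kW of recovered heat below are illustrative assumptions, not design values.

```python
KELVIN_OFFSET = 273.15


def heat_pump_cop(source_c: float, sink_c: float, carnot_fraction: float) -> float:
    """Estimated heating COP: a fraction of the ideal (Carnot) heating COP."""
    t_hot = sink_c + KELVIN_OFFSET
    t_cold = source_c + KELVIN_OFFSET
    return carnot_fraction * t_hot / (t_hot - t_cold)


def lift_heat(source_heat_kw: float, cop: float) -> tuple[float, float]:
    """Return (delivered heat kW, compressor electricity kW) for a given source heat.

    Energy balance: delivered = source + electricity, and cop = delivered / electricity,
    so electricity = source / (cop - 1).
    """
    if cop <= 1:
        raise ValueError("heating COP must exceed 1")
    electricity = source_heat_kw / (cop - 1)
    return source_heat_kw + electricity, electricity


# Assumed: 35 degC liquid-cooling return lifted to 65 degC, at 50% of Carnot.
cop = heat_pump_cop(source_c=35, sink_c=65, carnot_fraction=0.5)
delivered, electricity = lift_heat(80.0, cop)  # 80 kW of recovered server heat (assumed)
print(f"COP ~{cop:.1f}; {delivered:.1f} kW delivered for {electricity:.1f} kW of electricity")
```

A cooler source widens the lift and lowers the COP, which is why higher-temperature liquid loops matter.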

Still, the opportunity is real. Reviews of data-centre heat recovery and building-integrated edge data centres show that smaller facilities can be designed explicitly around local heat demand. The Greater London Authority’s guidance is especially instructive: with strong design focus and suitable cooling architecture, a very high proportion of IT load can be recovered as useful heat in smaller edge facilities; liquid-to-liquid systems are particularly favourable because they produce higher-temperature heat than conventional air-cooled designs. The same guidance also notes that edge or micro data centres in the 250 kW to 5 MW range can be sited close to heat users, which is critical because transporting low-grade heat over distance quickly becomes uneconomic.

So the core premise is directionally right: a distributed network of inference-capable edge facilities embedded in buildings with steady thermal demand could deliver two services, digital inference and useful heat, from a single electrical input. A more precise way to say it is that such sites can materially increase effective energy productivity and reduce the need for separate heating energy.
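
One established way to express "two services from one electrical input" is to report The Green Grid's reuse metrics alongside PUE: Energy Reuse Effectiveness, ERE = (total facility energy - reused energy) / IT energy, and Energy Reuse Factor, ERF = reused energy / total facility energy (standardised as ISO/IEC 30134-6). The daily energy figures below are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SiteEnergy:
    it_kwh: float      # energy delivered to IT equipment
    total_kwh: float   # total facility energy (IT + cooling, pumps, losses)
    reused_kwh: float  # energy exported as useful heat

    def pue(self) -> float:
        return self.total_kwh / self.it_kwh

    def ere(self) -> float:
        # Energy Reuse Effectiveness: credits reused energy against the facility total.
        return (self.total_kwh - self.reused_kwh) / self.it_kwh

    def erf(self) -> float:
        # Energy Reuse Factor: share of total facility energy that is reused.
        return self.reused_kwh / self.total_kwh


# Hypothetical day at a heat-reusing edge site.
site = SiteEnergy(it_kwh=1_000, total_kwh=1_150, reused_kwh=700)
print(f"PUE {site.pue():.2f}, ERE {site.ere():.2f}, ERF {site.erf():.2f}")
```

Because total facility energy can never fall below IT energy, ERE can only drop below 1.0 when some energy is reused, which is why it captures the heat-reuse case better than PUE alone.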

Why not just keep everything in giant campuses?

Because giant campuses are not optimal for every workload, and they are especially weak when it comes to heat reuse. The problem is not that hyperscale operators are unaware of waste heat. It is that very large campuses are often sited where power, land, and planning are available, not where there is a large, constant nearby heat off-taker. Guidance from London’s heat-reuse work notes that large data centres often sit outside dense urban areas and may struggle to find local customers that can absorb their heat continuously. Heating demand is also seasonal, which makes matching a constant 24x7 heat source to a variable load difficult unless there is thermal storage, industrial demand, or a substantial heat-network backbone.
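
The seasonality problem can be illustrated by matching a constant heat source against a demand curve that peaks in winter. The monthly figures are invented for illustration; the shape matters more than the numbers. Without storage, any month in which supply exceeds demand rejects the surplus.

```python
# Hypothetical monthly heat demand (MWh), January..December, for one off-taker.
MONTHLY_DEMAND_MWH = [150, 140, 120, 90, 60, 40, 35, 35, 55, 90, 120, 145]
CONSTANT_SUPPLY_MWH = 100  # assumed steady monthly heat output from a 24x7 compute load


def usable_fraction(supply_per_month: float, demand: list[float]) -> float:
    """Share of a constant heat supply that a varying demand can absorb (no storage)."""
    absorbed = sum(min(supply_per_month, d) for d in demand)
    return absorbed / (supply_per_month * len(demand))


share = usable_fraction(CONSTANT_SUPPLY_MWH, MONTHLY_DEMAND_MWH)
print(f"{share:.0%} of the heat finds a use; the rest is rejected")
```

Flattening the demand side, by aggregating off-takers or adding storage, is what pushes that share up.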

This is one reason edge facilities deserve more attention. A 1 MW edge site attached to a hotel, residential complex, hospital, leisure centre, or mixed-use development may be far easier to integrate thermally than a 100 MW campus in a remote location. The heat problem is smaller, the off-taker is closer, and the avoided heating load is more visible. In that setting, the compute node becomes part of a building-energy system rather than a standalone electrical load.

The technical case for inference at the edge

The technical argument for edge inference is strongest where latency, bandwidth, resilience, or privacy really matter. Academic surveys of edge AI consistently point to the same advantages: processing data near the point of use can reduce latency, cut backhaul bandwidth, improve privacy, and support services that cannot rely on perfect connectivity. This is particularly relevant for real-time industrial control, robotics, autonomous systems, on-premise copilots, surveillance analytics, healthcare workflows, and interactive AI applications where response speed affects user experience or safety.
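
The backhaul argument is easy to size. Consider a hypothetical site with 50 cameras, each streaming at an assumed 4 Mbit/s, compared with running the vision model on site and sending only small event records upstream (assumed 1 kB each, 10 per camera per minute).

```python
SECONDS_PER_DAY = 86_400
MINUTES_PER_DAY = 1_440


def raw_stream_bytes_per_day(cameras: int, bitrate_bps: int) -> int:
    """Bytes/day if every stream is backhauled to a remote data centre."""
    return cameras * bitrate_bps * SECONDS_PER_DAY // 8


def event_bytes_per_day(cameras: int, events_per_min: int, bytes_per_event: int) -> int:
    """Bytes/day if inference runs on site and only detections are sent upstream."""
    return cameras * events_per_min * MINUTES_PER_DAY * bytes_per_event


raw = raw_stream_bytes_per_day(cameras=50, bitrate_bps=4_000_000)
events = event_bytes_per_day(cameras=50, events_per_min=10, bytes_per_event=1_000)
print(f"Backhaul: {raw / 1e12:.2f} TB/day raw vs {events / 1e9:.2f} GB/day of events")
print(f"Reduction factor: ~{raw // events:,}x")
```

Under these assumptions, local inference cuts backhaul by roughly three orders of magnitude.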

Inference can indeed be latency-sensitive. Not every workload is, but many commercially important ones are. A user-facing assistant, real-time translation system, visual inspection pipeline, fraud screen, robotics controller, or AI-enabled customer interaction often wins or loses on responsiveness. MIT reporting on AI energy use also highlights the commercial pressure to make generative systems respond immediately, which reinforces the case for keeping some inference geographically and topologically closer to demand.
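
Distance sets a hard floor under response time. Light in optical fibre travels at roughly two-thirds of its vacuum speed, about 200 km per millisecond, so every 100 km of fibre adds roughly 1 ms of round-trip delay before any routing, queuing, or model computation. The distances below are hypothetical, and real fibre paths are usually longer than the straight-line distance.

```python
FIBRE_KM_PER_MS = 200.0  # approx. speed of light in glass (~2/3 c)


def min_round_trip_ms(path_km: float) -> float:
    """Propagation-only round-trip time over a fibre path of `path_km` each way."""
    return 2 * path_km / FIBRE_KM_PER_MS


# Hypothetical paths: on-site edge node, metro data centre, distant campus.
for label, km in [("edge (1 km)", 1), ("metro (50 km)", 50), ("remote (1,500 km)", 1_500)]:
    print(f"{label:>18}: >= {min_round_trip_ms(km):.2f} ms")
```

Propagation is only one term: a 15 ms floor matters little for a chat reply that takes seconds to generate, but it compounds for agent loops or control systems that make many round trips per decision.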

There are limits, of course. Large models remain memory-hungry and power-dense. Research on running LLMs on edge platforms shows why this is difficult: even modest models can require substantial memory and energy, which is why optimisation, quantisation, and hardware-software co-design matter so much. The future therefore is unlikely to be “all edge” or “all hyperscale.” It will be hybrid. Smaller models, distilled models, retrieval-heavy workflows, and latency-critical inference may move outward. Frontier training and the largest shared inference pools will stay centralised where utilisation and infrastructure economics are strongest.
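
The memory constraint follows from a simple product: model weights alone occupy roughly parameters × bytes per parameter, which is why quantisation matters so much at the edge. The sketch ignores the KV cache, activations and runtime overhead, all of which add to the real footprint; the 7-billion-parameter size is an illustrative assumption.

```python
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate memory for model weights only, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


for precision in BYTES_PER_PARAM:
    print(f"7B params @ {precision}: ~{weight_memory_gb(7e9, precision):.1f} GB")
```

Going from 16-bit to 4-bit weights cuts the footprint by a factor of four, which can be the difference between fitting on an edge accelerator and not.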

The environmental and social upside of distributed, heat-reusing edge sites

If done well, this architecture could produce real benefits. First, it can reduce wasted heat by turning an unavoidable by-product of computation into a useful thermal output. Second, it can lower the need for separate electric or fossil-fuel water heating in the host building. Third, because smaller edge facilities can sometimes connect at lower power levels than hyperscale campuses, they may avoid some of the most disruptive grid-reinforcement requirements associated with very large concentrated loads, even though local upgrades may still be needed. Fourth, they can make the local value proposition more tangible: the community does not just host compute; it receives heat, resilience, and possibly lower building energy costs.
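
The second benefit can be roughed out as avoided fuel. If recovered heat displaces a gas boiler, the gas saved is the heat delivered divided by boiler efficiency, and the avoided CO2 follows from a fuel emission factor. All three inputs below are assumptions for illustration; a real assessment would use local emission factors and subtract the electricity used by pumps and any heat pump.

```python
def avoided_boiler_co2_tonnes(heat_delivered_kwh: float, boiler_efficiency: float,
                              kg_co2_per_kwh_fuel: float) -> float:
    """Tonnes of CO2 avoided when recovered heat replaces boiler output."""
    fuel_kwh = heat_delivered_kwh / boiler_efficiency
    return fuel_kwh * kg_co2_per_kwh_fuel / 1_000


# Assumed: 500 MWh/year of recovered heat, 90%-efficient gas boiler,
# ~0.18 kg CO2 per kWh of natural gas burned.
tonnes = avoided_boiler_co2_tonnes(500_000, 0.90, 0.18)
print(f"~{tonnes:.0f} t CO2/year avoided (before deducting pump/heat-pump electricity)")
```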

There is also a planning benefit. Instead of treating digital growth and decarbonisation as competing agendas, edge heat-reuse schemes let them reinforce each other. AI demand would still grow, but more of that growth could be absorbed into existing buildings and district-energy infrastructure rather than requiring another giant campus, another very large interconnection, and another difficult conversation about who carries the cost.

The catch: this only works when the use case is disciplined

The risk is overgeneralisation. Not every office block has the right thermal profile. Not every inference workload needs ultra-low latency. Not every building has the plant-room space, hydraulic layout, electrical capacity, operational sophistication, or tenancy model to host an edge data centre. Heat reuse also works best when demand is durable and coincident. If the building’s hot-water demand collapses overnight or seasonally, the economics weaken unless there is storage or a shared network of off-takers. Even optimistic guidance assumes backup plant, careful design, and a credible operating model.
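
Storage sizing shows what "unless there is storage" implies physically. A water tank holds E = m × c × ΔT, so the volume needed to bank a given surplus depends heavily on the usable temperature swing. The 200 kWh overnight surplus and the swings below are illustrative assumptions, and the sketch ignores standing losses and stratification.

```python
SPECIFIC_HEAT_WATER_KJ_PER_KG_K = 4.186
KJ_PER_KWH = 3_600
KG_PER_M3 = 1_000  # approx. density of water


def tank_volume_m3(surplus_kwh: float, delta_t_k: float) -> float:
    """Water volume needed to store `surplus_kwh` across a temperature swing of `delta_t_k`."""
    mass_kg = surplus_kwh * KJ_PER_KWH / (SPECIFIC_HEAT_WATER_KJ_PER_KG_K * delta_t_k)
    return mass_kg / KG_PER_M3


for swing in (10, 20, 40):
    print(f"200 kWh over a {swing} K swing: ~{tank_volume_m3(200, swing):.1f} m3")
```

Halving the usable swing doubles the tank, so low-grade heat is expensive to store as well as to use.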

So the right conclusion is not that edge inference will replace hyperscale; it will not. The better conclusion is that AI is creating room for a new layer of infrastructure between the device and the hyperscale campus: compact, liquid-cooled, heat-aware edge facilities designed around specific inference workloads and specific local energy systems. In the right locations, those sites could reduce latency, improve privacy, cut bandwidth, create value from waste heat, and ease pressure on the grid compared with concentrating every new AI workload into ever larger campuses.

What the future probably looks like

The future is not one architecture winning; it is stratification.

Frontier training will continue to pull toward a small number of very large, power-hungry campuses. High-volume shared inference will often stay there too, especially where utilisation, model size, and operational simplicity dominate. But as AI becomes embedded into buildings, enterprises, cities, and real-time systems, a second trend should accelerate alongside it: inference moving outward to edge nodes where latency matters, privacy matters, bandwidth matters, and heat can be captured rather than wasted.

That is the deeper point. The AI boom is not only about building more data centres. It is about deciding which data centres to build, where to put them, and how intelligently to connect digital infrastructure with the physical energy systems around it. The most valuable AI infrastructure of the next decade may not be the facility that consumes the most power; it may be the one that makes each kilowatt-hour do the most useful work.