Building the next generation of AI data centres: speed, scale and social licence at 100MW+

The AI infrastructure market is moving out of the experimental phase and into industrial scale. For rapidly growing neocloud providers, that shift is redefining what “data-centre delivery” actually means.

This is no longer about fitting incremental compute into traditional colocation footprints. It is about designing, building and operating 100MW+ campuses capable of supporting rack densities above 150kW, with deployment timelines that match the speed of AI demand. At that level, conventional assumptions start to fail. Air cooling stops being viable at meaningful scale. Power availability becomes the gating factor. Grid connection timelines begin to shape commercial strategy. And the ability to operate efficiently, credibly, and responsibly becomes just as important as the ability to build quickly.

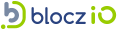

The market opportunity is obvious. Public cloud growth continues to accelerate, while AI infrastructure demand is creating an even steeper curve in capacity requirements. But growth at this level creates a new set of constraints. The challenge is no longer simply securing capital or procuring GPUs. The harder question is how to deliver large-scale, high-density infrastructure fast enough, with enough certainty, and with a model that can withstand scrutiny from investors, customers, utilities, regulators and local communities.

The real constraint is delivery at industrial scale

For neocloud businesses, demand is not the problem. The problem is the physical reality of serving it.

A 100MW+ AI campus is now a major energy and infrastructure project. It requires land with a credible power path, a resilient water strategy, robust fibre connectivity, and a supply chain capable of delivering long-lead electrical infrastructure on time. In many markets, the critical bottlenecks are already well understood: limited utility capacity, substation reinforcement delays, shortages of transformers and switchgear, constrained land near established connectivity hubs, and planning environments that were not designed for this pace or scale of development.

As densities rise above 150kW per rack, complexity increases again. These environments cannot be treated as scaled-up versions of legacy enterprise or colocation facilities. Thermal design, hydraulic design, plant resilience, maintainability and operational choreography all become mission-critical. At this density, poor coordination between design, construction and operations is not an inconvenience. It is a major delivery risk.

This is why the next generation of neocloud providers needs more than contractors. They need partners who understand the full infrastructure stack: power strategy, campus planning, liquid cooling integration, programme delivery, commissioning, operational readiness and long-term optimisation.

Liquid cooling is now a necessity, not a differentiator

At densities exceeding 150kW per rack, liquid cooling is no longer a premium option. It is the enabling technology.

AI workloads are driving heat densities far beyond what traditional air architectures can handle efficiently or economically. Liquid cooling unlocks higher compute density, better thermal control, lower fan energy, and more effective support for the next generation of accelerated compute.

But liquid cooling also carries a much broader message.

It signals that the operator is not trying to force tomorrow’s workloads into yesterday’s infrastructure model. It shows a willingness to adopt a more efficient, more deliberate and more future-ready approach to compute deployment. Done properly, liquid cooling becomes part of the social narrative rather than just a fix for a thermal problem. It demonstrates that higher-density AI infrastructure does not have to mean proportionally higher environmental impact. It can mean better energy efficiency, more controlled thermal performance, better use of power, and a more credible route to heat recovery and resource stewardship.

In that sense, liquid cooling is not just a technical solution. It is evidence of infrastructure maturity.

The winners will be those who compress time to market without compromising operability

For neoclouds, speed matters. Revenue depends on bringing capacity online fast. Customers want committed delivery dates. GPU roadmaps do not wait for substation upgrades. Every delay in energisation, fit-out or commissioning has a direct commercial impact.

But the wrong kind of speed is dangerous.

Trying to accelerate 100MW+ developments without the right delivery model simply shifts risk downstream, where it becomes far more expensive. Poorly coordinated design packages, late-stage utility surprises, fragmented procurement, immature cooling strategies, incomplete commissioning plans and underdeveloped operating models all create hidden drag. They may preserve the appearance of speed in early project phases, but they usually surface later as delays, rework, instability or underperformance.

The smarter route is to reduce time to market through certainty, not through shortcuts.

That means selecting sites based on realistic power delivery pathways, not just headline availability. It means engaging utilities, planners and critical equipment suppliers early enough to shape the programme rather than react to it. It means standardising what can be standardised across electrical, mechanical and liquid-cooling infrastructure. It means using modular and prefabricated approaches where they genuinely de-risk delivery. And it means designing the operating model in parallel with the asset, so that handover is not the point at which problems first become visible.

For neoclouds scaling aggressively, this is where the right delivery partner adds disproportionate value: not simply by building the asset, but by helping collapse the gap between concept, energisation, operational readiness and commercial service.

Power strategy now sits at the centre of business strategy

At 100MW+, power is not a utility issue; it is a board-level issue.

The most competitive AI infrastructure businesses will increasingly be those that treat energy strategy as a core part of market entry and customer growth. That includes grid access, yes, but also load flexibility, on-site resilience, energy procurement, phasing strategy, and the ability to align new demand with cleaner supply over time.

There is a temptation in fast-growth markets to think only in terms of connection size: how much power can be secured, and how quickly. But the more important question is how intelligently that power can be used.

High-density AI facilities should be designed around energy productivity. That means making sure every megawatt translates into useful compute, not unnecessary thermal losses, stranded redundancy or inefficient operating practices. It means tighter plant integration, better controls, higher system visibility and continuous optimisation once the campus is live. And it means ensuring that liquid cooling, electrical architecture and software orchestration are treated as connected parts of a single system.
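To make the energy-productivity point concrete, here is a rough arithmetic sketch of how facility overhead changes the number of 150kW racks that a 100MW power envelope can support. It uses power usage effectiveness (PUE, total facility power divided by IT power) as a simple proxy for overhead. The function name and the PUE values are illustrative assumptions, not figures from any specific campus, and the calculation ignores redundancy, phasing and diversity factors.

```python
def racks_supported(facility_mw: float, pue: float, rack_kw: float) -> int:
    """Return the number of whole racks a facility power envelope can feed.

    facility_mw: total facility power in megawatts (IT load plus overhead).
    pue: power usage effectiveness, total facility power / IT power.
    rack_kw: average power drawn per rack in kilowatts.
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    if rack_kw <= 0:
        raise ValueError("rack_kw must be positive")
    # Power left for IT equipment once cooling and electrical losses are covered.
    it_kw = facility_mw * 1000.0 / pue
    return int(it_kw // rack_kw)


if __name__ == "__main__":
    # Hypothetical PUE values, chosen only to show the sensitivity.
    for pue in (1.5, 1.2, 1.1):
        racks = racks_supported(facility_mw=100, pue=pue, rack_kw=150)
        print(f"PUE {pue}: {racks} racks at 150kW")
```

Under these assumptions, the same 100MW connection feeds 444 racks at a PUE of 1.5, 555 at 1.2 and 606 at 1.1. The exact numbers matter less than the direction: each reduction in overhead turns directly into additional usable compute from the same grid connection.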

The opportunity is huge. The neocloud providers who can demonstrate better compute-per-megawatt outcomes will not just reduce cost; they will also strengthen their credibility with customers, utilities and capital providers alike.

Social licence is becoming a competitive advantage

There is also a broader issue that the sector cannot afford to ignore.

Large AI data centres increasingly attract public scrutiny because they concentrate power demand, land use, and infrastructure investment into single sites at unprecedented scale. If communities see these developments as extractive, opaque or environmentally indifferent, resistance will grow. Planning will get harder. Delivery risk will increase. Reputational cost will soon follow.

This is why social impact can no longer be treated as a communications exercise after the engineering decisions have been made.

For the next generation of high-density campuses, social licence has to be designed in from the start. That means clear thinking about land use, water stewardship, visual impact, local infrastructure effects, employment, skills, and the long-term value the development brings to the surrounding area. It also means being able to explain, in credible terms, how technologies such as liquid cooling improve resource efficiency and reduce avoidable waste.

This is where the “social message” of liquid cooling becomes especially powerful. It helps tell a story that communities and stakeholders can understand: that AI infrastructure is being built with greater thermal efficiency, more intentional resource use, and a more modern approach to sustainability than the legacy data-centre model. It does not solve every concern on its own, but it is a tangible sign that the industry is evolving in the right direction.

For operators competing for capital, customers, and access to new markets, that matters. The ability to show that growth is being delivered responsibly is becoming commercially valuable.

What should the industry be doing now?

For neocloud companies planning 100MW+ deployments, several priorities are becoming clear.

First, choose partners with integrated capability. At these densities and scales, the handoffs between strategy, design, construction, commissioning and operations create too much risk if they are not tightly aligned. The partner ecosystem has to understand the whole lifecycle.

Second, design for liquid cooling from the outset. Retrofitted thinking will not work. Hydraulic resilience, heat rejection strategy, maintainability, controls and white-space configuration all need to be embedded from day one.

Third, bring operations into the project early. The ultra-high-density facilities that succeed are not just the ones that can be built. They are the ones that can be run safely, reliably, and efficiently at sustained high load.

Fourth, prioritise power certainty over paper capacity. A plausible, phased path to energisation is worth more than an aggressive headline that cannot be delivered in practice.

Fifth, treat sustainability and community impact as delivery enablers. Better energy use, smarter cooling, stronger water strategies and more transparent engagement do not just reduce environmental impact. They reduce friction.

A new infrastructure model for AI growth

The next wave of AI infrastructure will not be won by companies that simply demand more capacity. It will be won by those that can deliver dense, power-hungry, liquid-cooled campuses faster, more predictably and more responsibly than the rest of the market.

That requires a different mindset. At 100MW+ and 150kW+ per rack, data-centre delivery becomes a discipline of industrial integration. Power, land, cooling, construction, commissioning, operations and community impact all have to work together. There is no room for fragmented delivery models or legacy assumptions.

For high-growth neoclouds, the strategic question is therefore straightforward: who can help us scale at the pace AI demands, while preserving efficiency, resilience, credibility and social licence?

That is the real challenge now. It is also the opportunity.