The AI Infrastructure Race Has Moved Down the Stack: From GPUs to Gigawatts

For the last two years, “AI infrastructure” has been shorthand for GPUs. Whoever could secure GPUs could train bigger models, serve more tokens, and ship faster. That mental model is now incomplete.

What we’re seeing in 2026 is a shift in the true critical path: compute procurement is becoming more multi-sourced and contractable, while power, land, and electrical gear are turning into the hardest constraints.

Why the hyperscalers are signing “everything” deals

Look at the direction of travel from the largest buyers:

- Meta and NVIDIA announced a multiyear partnership spanning CPUs, networking, and “millions” of Blackwell and Rubin GPUs, plus Spectrum‑X Ethernet and Grace CPU deployments.

- Meta and AMD announced a long-term agreement for up to 6 gigawatts of AMD Instinct GPU deployments, with initial shipments beginning in the second half of 2026.

- Meta has guided 2026 capex of $115B–$135B (including finance lease principal payments), with growth driven by infrastructure investment for its AI efforts.

This pattern of large, forward-looking commitments across silicon generations and multiple vendors tells you two things:

- Nobody wants a single choke point. Vendor concentration risk is real when roadmaps, packaging capacity, HBM supply, and networking all have their own constraints.

- The biggest players are buying optionality. They’re locking in supply and negotiating room to adapt as inference/training mix shifts, architectures evolve, and efficiency curves move.

But the most important takeaway isn’t “Meta bought a lot of GPUs.” It’s this:

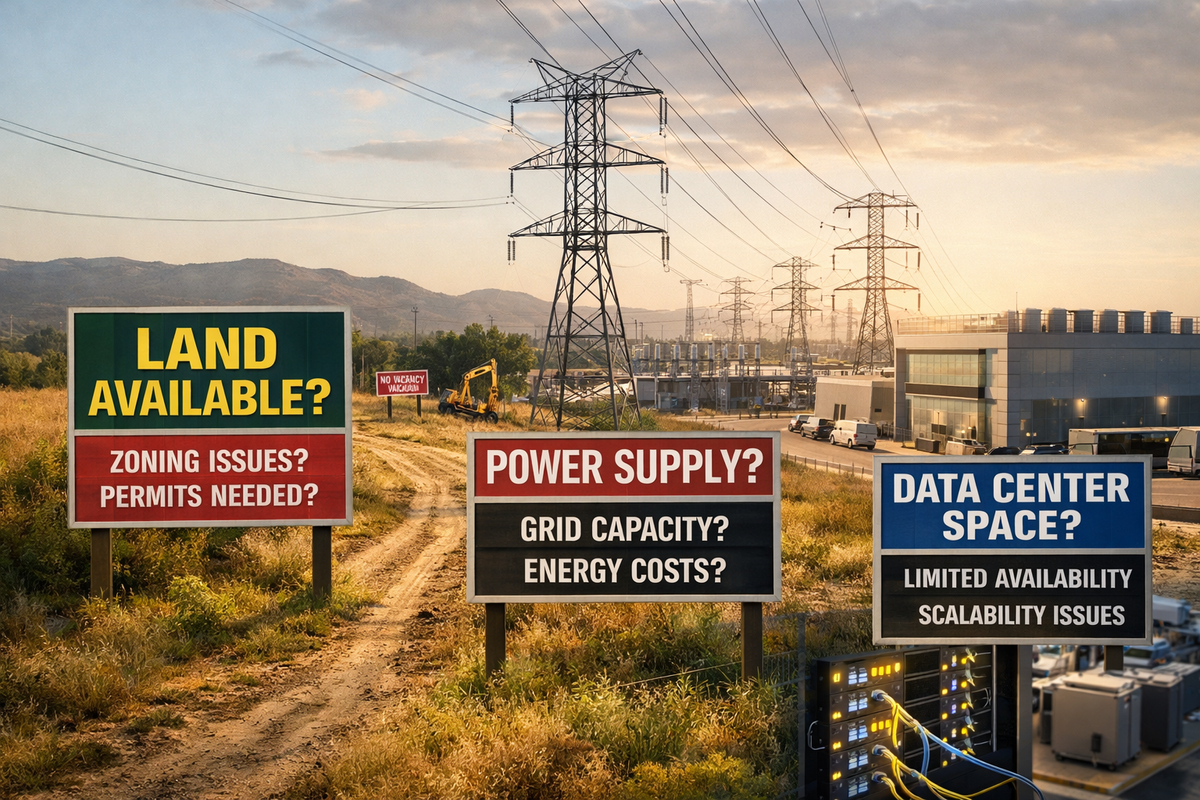

At the frontier, GPUs are no longer the only gating item. The gating items are increasingly physical: megawatts, substations, transformers, cooling loops, permitting, and land.

The physics are catching up with the hype

Modern AI systems are brutally power-dense. To make this tangible:

- NVIDIA’s DGX GB200 documentation pegs rack power at ~120 kW.

- Industry analyses increasingly talk about AI deployments pushing rack densities into the hundreds of kW and beyond. For example, JLL has discussed rack densities ranging from roughly 130 kW to 250 kW for next-gen AI infrastructure.

- Liquid cooling is becoming the norm for high-density deployments, ultimately increasing density far beyond where it is today.

Now do the data centre maths that procurement teams often skip:

- 100 racks at 120 kW ≈ 12 MW of IT load

- With a respectable PUE of 1.2, facility draw becomes ~14–15 MW

- Scale that to multiple halls, then a campus, and you’re suddenly negotiating like a heavy industrial operator, not “just” an IT buyer.
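The arithmetic above can be reproduced in a few lines. This is a minimal sketch, assuming the simple model that facility draw equals IT load multiplied by PUE; the function name `facility_draw_mw` is illustrative, and the inputs are the figures from the bullets above.

```python
def facility_draw_mw(racks: int, kw_per_rack: float, pue: float) -> tuple[float, float]:
    """Return (IT load in MW, total facility draw in MW)."""
    # IT load: rack count times per-rack power, converted from kW to MW.
    it_load_mw = racks * kw_per_rack / 1000.0
    # Facility draw: IT load scaled by PUE (cooling, conversion losses, etc.).
    return it_load_mw, it_load_mw * pue


it_mw, facility_mw = facility_draw_mw(racks=100, kw_per_rack=120, pue=1.2)
print(f"IT load: {it_mw:.1f} MW, facility draw: {facility_mw:.1f} MW")
```

Changing `racks` or `kw_per_rack` shows how quickly a modest-sounding rack count turns into an industrial-scale power request.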

In my experience, the example above is not unusual; in fact, it is increasingly regarded as "small". At the time of writing, all the deals I am personally working on are many orders of magnitude larger than this. This is why the conversation is drifting from “How many GPUs did you get?” to “Where are you going to run them?”

The new critical path: interconnection plus electrical equipment

Even if you can buy GPUs, you still need a place to plug them in. And the grid side is not moving at software speed:

- Reporting has highlighted long interconnection timelines and the broader frictions facing data centre buildouts: grid limits, regulatory reviews, community opposition, and supply constraints.

- Transformer lead times have stretched dramatically in many markets; industry commentary and research frequently point to multi-year waits for large units. For example, Eurelectric cites transformer lead times rising to around 2.5 years on average and up to ~4 years for large units (referencing its “Grids for Speed” work).

- Trade and industry coverage has also tracked elevated transformer lead times in the 100+ week range in recent years.

So even if your building is ready, you can end up in a bizarre limbo: a finished facility waiting on the powertrain (transformers, switchgear, utility substation work, transmission upgrades).

In other words, electrical hardware has become a first-class dependency.

Land and permitting: the other half of “capacity”

Power is only one side of the constraint. You also need:

- Enough land for phased expansion, setbacks, cooling plant, substations, and fibre routes

- Permitting certainty, which depends on local politics and community alignment

- Water and heat rejection strategy, which is increasingly a public conversation

Meta’s public data-centre messaging is revealing here. It has described breaking ground on its 30th data centre (Beaver Dam, Wisconsin), and explicitly talks about underwriting energy infrastructure investments (substations, transmission lines) as part of these builds.

And on the extreme end, TechCrunch reported Meta’s plans for Hyperion in Louisiana, which targets gigawatt-scale capacity with ambitions to scale up to 5 GW over time.

Whether you’re Meta-sized or not, the lesson holds: compute strategy is now inseparable from real-estate strategy and grid strategy.

The “AI factory” playbook for 2026: what serious builders do differently

If you’re planning meaningful AI capacity for the rest of 2026 (and beyond), treat this like building a production plant. The winners behave less like IT buyers and more like infrastructure developers.

1) Start with a power thesis, not a GPU SKU

Before you pick a chip generation, answer:

- What’s your target MW envelope in 12, 24, 36 months?

- Do you need firm capacity (always-on) or can you be a flexible load (curtailment, demand response)?

- What’s your acceptable timeline for interconnect, and what’s your Plan B?

This flips procurement from “GPU first” to power-first site selection.
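One way to make the power-first framing concrete is to work backwards from a MW envelope to the number of racks it can feed. The sketch below is illustrative only: the envelope values at 12, 24, and 36 months are hypothetical placeholders, and the 120 kW rack and PUE of 1.2 are carried over from the earlier example.

```python
import math


def racks_supported(facility_mw: float, kw_per_rack: float, pue: float) -> int:
    """Whole racks that fit inside a facility power envelope."""
    # Power left for IT after facility overhead, in kW.
    it_kw = facility_mw * 1000.0 / pue
    return math.floor(it_kw / kw_per_rack)


# Hypothetical envelope: months from now -> facility MW secured by then.
envelope_mw = {12: 15, 24: 40, 36: 100}

rack_plan = {
    months: racks_supported(mw, kw_per_rack=120, pue=1.2)
    for months, mw in envelope_mw.items()
}
for months, racks in rack_plan.items():
    print(f"{months} months: {envelope_mw[months]} MW supports {racks} racks")
```

The rack counts fall out of the power numbers, not the other way round, which is the point of starting with a power thesis.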

2) Secure the powertrain early (transformers, switchgear, UPS)

Assume long lead times and design accordingly:

- Place orders for large electrical gear early in the program

- Standardize where possible (repeatable electrical one-lines)

- Don’t underestimate commissioning complexity at high densities

If transformer delivery is 24–48 months in your region, your “GPU delivery date” is almost irrelevant.
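To see why, here is a rough sketch of the gating logic. It assumes all workstreams start at the same time and run in parallel, so the earliest go-live is set by the longest lead time. Every figure below is a hypothetical placeholder except the transformer value, which sits inside the 24–48 month range mentioned above.

```python
# Hypothetical lead times in months; only the transformer figure is
# drawn from the 24-48 month range discussed in the text.
lead_times_months = {
    "gpu_delivery": 9,
    "transformer_delivery": 36,
    "switchgear_delivery": 18,
    "utility_interconnect": 30,
    "cooling_plant": 14,
}


def critical_path(lead_times: dict[str, int]) -> tuple[str, int]:
    """Return the gating item and its lead time, assuming parallel workstreams."""
    item = max(lead_times, key=lead_times.get)
    return item, lead_times[item]


gating_item, go_live_months = critical_path(lead_times_months)

# Slack: how long each item could slip without moving the go-live date.
slack_months = {
    name: go_live_months - months for name, months in lead_times_months.items()
}

print(f"Gating item: {gating_item} ({go_live_months} months)")
for name, slack in sorted(slack_months.items(), key=lambda kv: kv[1]):
    print(f"  {name}: {slack} months of slack")
```

Under these assumed numbers, the GPUs could slip by more than two years before they affected the go-live date. A real program would also model sequential dependencies such as commissioning after delivery, which this sketch ignores.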

3) Design for density and operability

High-density AI clusters aren’t just “more servers”:

- Cooling shifts toward direct liquid cooling and engineered coolant distribution

- Failure domains matter more (a single busway mistake can take out a staggering amount of compute)

- Networking becomes a power and airflow story (cabling, optics, switch placement, thermal zones)

The operational difference between “we have racks” and “we have a stable AI factory” is usually in these engineering decisions.

4) Treat permitting/community as a core workstream

Delays are often political and social, not technical:

- Water usage, noise, generator emissions, and grid impacts are flashpoints

- Transparency and community benefits (jobs, infrastructure upgrades) can materially change timelines

Recent reporting shows the growing friction around U.S. data centre expansion, including delays and cancellations tied to power availability and local opposition.

5) Keep silicon flexible, but lock down delivery capacity

Multi-vendor strategies are becoming standard for large buyers because they:

- Reduce roadmap risk

- Increase negotiating leverage

- Allow optimization by workload (training vs inference vs embedding/search)

Meta explicitly frames its AMD deal as part of a “portfolio-based approach” to infrastructure diversity.

What this means for enterprises (not just hyperscalers)

Most enterprises can’t (and shouldn’t) imitate hyperscalers dollar-for-dollar. But you can copy the sequencing:

- Identify sites and power pathways first (utility interconnect, colocation capacity, or behind-the-meter options).

- Pre-qualify electrical and cooling designs for the densities your roadmap implies.

- Lock capacity contracts (data hall or campus capacity and power) early, then finalize GPU procurement against a real deployment envelope.

The teams moving fastest in 2026 aren’t the ones who found a way to “buy GPUs”. They’re the ones who have a rock-solid answer for where the megawatts come from, when they arrive, and what it takes to keep the plant stable at 24/7 utilization.

Key takeaway: Ask yourself one simple question

If someone dropped your entire 2026 GPU allocation on the dock tomorrow, could you:

- commission the electrical gear,

- bring up the cooling loop,

- complete interconnect,

- and run at target density

And all within the quarter?

If not, you don’t have a GPU problem. You have an infrastructure critical path problem.