The Inference Boom: Why Enterprise AI Will Be Won at the Workload Layer

For the last two years, AI scaling has been framed as a race for chips, then a race for power. Both framings still hold, but another race is emerging, one in which the ability to serve real-world workloads will be key to success.

The next phase of enterprise AI will not be decided only by who has the largest training cluster, the biggest campus, or the most aggressive capital expenditure plan. It will be decided by who can run inference in the right place, at the right cost, with the right latency, security, energy profile and operating model.

That is a very different problem from the ones the industry faces today, and it is why those who will succeed are already positioning themselves for it.

Training is about creating intelligence; inference is about applying it. Training gets the headlines because it is dramatic, expensive and visibly constrained by the largest GPU clusters. But enterprise value is realised when models are used inside live workflows, customer operations, industrial systems, software environments, energy networks, regulated processes and decision loops.

That is where the market is now moving.

The hyperscalers are still spending at extraordinary levels. Reuters reported that the combined AI outlays of Alphabet, Amazon, Microsoft and Meta are now expected to surpass $700 billion this year. The same report noted that investors are increasingly distinguishing between companies that can translate that spending into clear revenue growth and those where the payoff remains less obvious. Google Cloud’s 63 per cent revenue surge stood out because it gave the market a clearer link between AI infrastructure and enterprise adoption.

That is the real signal. AI capacity is no longer enough; the market is starting to ask what that capacity is for.

The shift from training scarcity to inference economics

The first phase of the AI infrastructure race was defined by scarcity. Access to GPUs mattered because it determined who could train frontier models, fine-tune specialist systems and serve fast-growing demand.

That has not gone away. The largest training workloads will remain highly concentrated because they depend on huge volumes of power, cooling, land, fibre, memory bandwidth and capital. But once AI moves from experimentation into production, the question changes.

It becomes less about whether a model exists, and more about whether it can be used reliably and economically at scale.

This is where inference becomes the centre of gravity. Every enterprise AI application creates inference demand. A customer support assistant generates inference. A software engineering agent generates inference. A grid optimisation tool generates inference. A visual inspection system in a factory generates inference. A private enterprise copilot grounded in internal systems generates inference. Every production use of a model, whatever the sector, generates inference.

The difference is that these workloads do not all behave in the same way.

Some can tolerate delay, some cannot. Some can run in centralised cloud regions. Some need to sit closer to the data. Some need strict sovereignty controls. Some are occasional. Others become continuous operational systems. Some are generic productivity tools. Others interact with physical infrastructure where latency, resilience and trust are not theoretical concerns.

That is why the next infrastructure advantage will be workload led.

Agents make this problem much harder

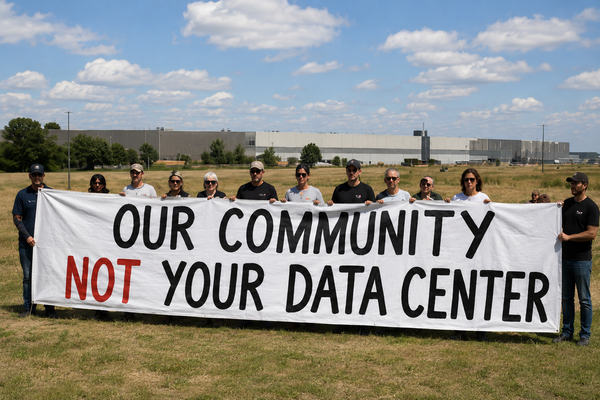

The rise of agentic AI accelerates this shift. A basic chatbot is relatively simple from an infrastructure point of view. It receives a prompt, generates a response, and the interaction ends. Agents are different.

A production agent may plan, call tools, search documents, query systems, write code, check outputs, ask for approval, repeat steps and maintain context across a workflow. That turns inference from a single interaction into a chain of actions. It also increases the importance of cost, governance, latency and control.

Gartner says only 17 per cent of organisations have deployed AI agents to date, but more than 60 per cent expect to do so within the next two years. Gartner also says governance, security and cost management are already emerging as critical concerns around agentic AI.

That matters because most enterprises are not going to deploy one agent. They are going to deploy many. Some will sit inside enterprise applications. Some will support software teams. Some will assist field engineers, analysts, operators and service teams. Some will interact with sensitive data and operational systems.

The moment that happens, inference stops being a background cloud service and becomes an operating cost that has to be actively managed.

That is already visible in FinOps. The FinOps Foundation’s 2026 report says 98 per cent of respondents now manage AI spend, up from 31 per cent two years ago. It also says AI cost management is the number one skillset teams need to develop.

This is the commercial reality behind the hype. Enterprises do not just need access to models; they need control over the unit economics of using them.

Cost per token is only the beginning

The industry often talks about inference in terms of cost per token. That is useful, but it is not enough.

The more important enterprise metric is cost per useful outcome.

A cheap model that takes too many steps, fails too often, or creates too much human rework may be expensive in practice. A more capable model that completes a task with fewer retries may be cheaper at the workflow level. A centrally hosted model may look efficient on paper, but if it adds latency, data movement, governance complexity or resilience risk, it may not be the right architecture for the workload.
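The comparison above can be made concrete with a small worked example. This is a minimal sketch, not a pricing model: the `WorkflowProfile` fields, the two profiles and every number in them are hypothetical, and it assumes each task is attempted once, with a failed attempt handed to a human at a fixed rework cost.

```python
from dataclasses import dataclass


@dataclass
class WorkflowProfile:
    """Hypothetical per-task profile for one model choice (illustrative values only)."""
    price_per_1k_tokens: float  # USD per 1,000 tokens
    tokens_per_step: int        # average tokens consumed per model call
    steps_per_task: float       # model calls needed per task
    success_rate: float         # fraction of tasks completed without human rework
    rework_cost: float          # USD of human time when a task fails


def cost_per_useful_outcome(p: WorkflowProfile) -> float:
    """Expected cost of one completed task: inference spend plus expected rework."""
    if not 0 < p.success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    inference_cost = p.price_per_1k_tokens * p.tokens_per_step / 1000 * p.steps_per_task
    expected_rework = (1 - p.success_rate) * p.rework_cost
    return inference_cost + expected_rework


# Two invented profiles: a cheap model that needs more steps and fails more often,
# and a model priced 20x higher per token that needs fewer steps and fails less.
cheap = WorkflowProfile(0.0005, 2000, 12, 0.70, 5.00)
capable = WorkflowProfile(0.01, 2000, 4, 0.95, 5.00)

for name, profile in (("cheap", cheap), ("capable", capable)):
    print(f"{name}: ${cost_per_useful_outcome(profile):.3f} per useful outcome")
```

Under these assumed numbers the model with the higher token price is the cheaper one per completed task, because human rework dominates the bill. Different assumptions would give a different answer, which is the reason to measure at the workflow level rather than the token level.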

This is why the workload layer matters.

The question is not simply which model is cheapest. The question is which combination of model, infrastructure, data location, latency profile, energy source and operating model produces the best outcome for that specific task.

That is also why falling inference costs do not reduce the importance of infrastructure. They increase it.

Stanford’s 2025 AI Index found that the inference cost for a system performing at the level of GPT-3.5 fell by more than 280-fold between November 2022 and October 2024. It also found that hardware costs declined by 30 per cent annually, while energy efficiency improved by 40 per cent each year.

On those numbers, inference looks as though it should become trivial. In reality, lower cost usually expands demand. More use cases become viable. More agents are deployed. More workflows become automated. More context is used. More decisions are supported by models.

Efficiency improves, but usage expands faster.
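The arithmetic behind that claim is simple: total spend is unit cost multiplied by usage, so spend rises whenever usage grows by a larger factor than unit cost falls. The sketch below converts the Stanford figure quoted above into an annualised factor. Treating November 2022 to October 2024 as 23 months is an assumption, the function names are illustrative, and the figure applies to a fixed GPT-3.5 level of capability rather than to whatever model an enterprise actually runs.

```python
def annualised_factor(total_factor: float, months: float) -> float:
    """Convert a change observed over `months` into an equivalent 12-month factor."""
    return total_factor ** (12 / months)


def spend_multiplier(unit_cost_drop: float, usage_growth: float) -> float:
    """Change in total spend when unit cost falls and usage grows over the same period.

    Both arguments are factors: a 10x cost drop is 10, a 30x usage rise is 30.
    """
    return usage_growth / unit_cost_drop


# 280-fold decline over an assumed 23-month window (Nov 2022 to Oct 2024).
cost_drop_per_year = annualised_factor(280, 23)
print(f"Implied cost decline: about {cost_drop_per_year:.0f}x per year")

# Hypothetical scenario: unit cost falls 10x while token usage grows 30x.
print(f"Spend multiplier: {spend_multiplier(10, 30):.1f}x")
```

The break-even point is where usage growth equals the cost decline. Agents that chain many calls and carry long context push usage up on exactly the axis that cheaper tokens make affordable.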

That is why the inference boom is not a small extension of the training boom. It is a different phase of the market.

The architecture becomes stratified

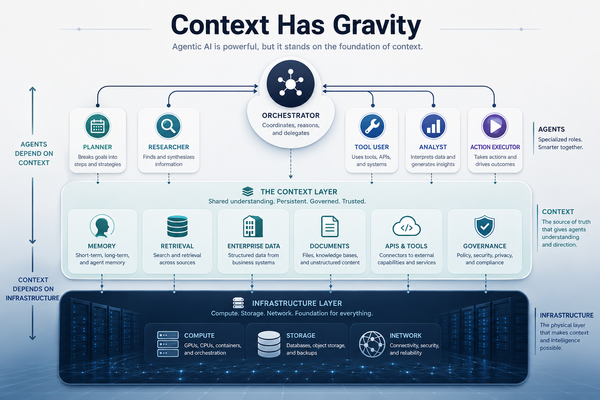

The future is not hyperscale or edge. It is both, plus several layers in between.

One layer will remain concentrated around frontier training and large shared inference pools. These environments need massive campuses, deep capital, advanced cooling, specialised supply chains and credible long-term power strategies.

A second layer will be regional. This is where inference can be placed closer to demand, closer to enterprise data, closer to sovereign requirements and closer to specific commercial markets. These sites may not match the largest campuses in scale, but they can be extremely valuable if they sit in the right energy, connectivity and customer environment.

A third layer will sit closer to the physical world. That includes industrial sites, transport corridors, energy networks, public safety environments, healthcare settings, regulated enterprise campuses and other places where the cost of distance becomes operationally real.

Not every workload belongs at the edge. That point is important. Frontier training will not move outwards. Most generic enterprise inference that is not latency-sensitive will continue to sit in central pools. The mistake is not in believing edge will matter. The mistake is assuming every AI workload should move there.

The winning model is more disciplined. Start with the workload. Understand the decision loop. Understand the data boundary. Understand the latency requirement. Understand the resilience requirement. Understand the cost of failure. Then decide where inference should run.
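As an illustration of that discipline, the placement decision can be written as a function of the workload's own requirements rather than of the infrastructure available. This is a deliberately simplified sketch: the `Workload` fields, the three tiers and the latency thresholds are all hypothetical, and the cost of failure is left out for brevity, although in practice it would inform how strict the thresholds need to be.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    CENTRAL = "central pool"
    REGIONAL = "regional site"
    EDGE = "edge / on-site"


@dataclass
class Workload:
    name: str
    max_latency_ms: float           # latency budget of the decision loop
    data_must_stay_in_region: bool  # sovereignty or data-boundary constraint
    must_run_if_link_down: bool     # resilience requirement


def place(w: Workload, edge_below_ms: float = 20, regional_below_ms: float = 100) -> Tier:
    """Pick the most central tier that still satisfies the workload's constraints.

    The thresholds are placeholder assumptions, not measured network figures.
    """
    if w.must_run_if_link_down or w.max_latency_ms < edge_below_ms:
        return Tier.EDGE
    if w.data_must_stay_in_region or w.max_latency_ms < regional_below_ms:
        return Tier.REGIONAL
    return Tier.CENTRAL


examples = [
    Workload("overnight document summarisation", 60_000, False, False),
    Workload("sovereign enterprise copilot", 2_000, True, False),
    Workload("factory visual inspection", 10, False, True),
]
for w in examples:
    print(f"{w.name}: {place(w).value}")
```

The function defaults to the central pool and only moves outwards when a specific requirement forces it, which mirrors the point that not every workload belongs at the edge.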

Energy still sets the boundary

This does not move the conversation away from energy. It makes the energy question more specific.

The IEA now projects data centre electricity consumption to roughly double from 485 TWh in 2025 to 950 TWh in 2030, with AI-focused data centre electricity consumption tripling over the same period. It also says AI server power density increased 11 times between 2020 and 2025, with a further fourfold increase expected by 2027.

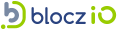
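To put the IEA projections on a common footing, they can be converted into implied compound annual growth rates. The sketch below uses only the figures quoted above. The helper name `cagr` is illustrative, and smooth compounding is an assumption of this calculation, not something the IEA states.

```python
def cagr(growth_factor: float, years: float) -> float:
    """Compound annual growth rate implied by a total growth factor over `years`."""
    return growth_factor ** (1 / years) - 1


# Total data centre electricity consumption: 485 TWh (2025) to 950 TWh (2030).
total_demand = cagr(950 / 485, 5)

# AI-focused data centre consumption: tripling over the same five years.
ai_demand = cagr(3, 5)

# AI server power density: 11 times higher between 2020 and 2025.
power_density = cagr(11, 5)

print(f"Total data centre demand: {total_demand:.1%} per year")
print(f"AI-focused demand:        {ai_demand:.1%} per year")
print(f"AI server power density:  {power_density:.1%} per year")
```

On these assumptions, AI-focused demand compounds noticeably faster than the data centre total, and power density rose faster than either over its own window.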

Those numbers matter, but the more important point is location. Power is not constrained in the abstract. It is constrained in specific places, on specific timelines, through specific substations, transformers, grid queues, planning systems and social licence conditions.

That means workload placement and energy strategy are now connected.

A workload that can shift in time may be able to support a more flexible energy model. A workload that needs real-time execution may require more resilient local capacity. A regional AI site with a credible path to energisation may be more valuable than a larger theoretical campus stuck behind years of grid reinforcement. An edge deployment may only make sense if the operational value is high enough to justify the complexity.

The point is not to push inference everywhere. The point is to place it intelligently.

What serious builders do differently

For infrastructure builders, operators and investors, the implication is clear. The next generation of AI infrastructure cannot be planned only around megawatts, racks and GPUs. Those still matter, but they are not enough.

The stronger question is: what workloads is this infrastructure designed to serve?

That changes the development model. It means site selection must consider not only power and fibre, but also workload demand, customer proximity, data gravity, sovereignty requirements, operating resilience and the ability to support different classes of inference. It means energy strategy must be tied to workload behaviour, not just total load. It means orchestration becomes as important as capacity. It means the commercial model has to account for cost per useful outcome, not just cost per token.

This is also where many projects will fail. Some will overbuild for generic demand that does not arrive. Some will push edge too early into workloads that still belong in central pools. Some will treat inference as a simple extension of cloud capacity, rather than a new operating layer with different economics. Some will ignore the fact that enterprises increasingly need governance, auditability, policy control and predictable cost before they can deploy AI deeply into core workflows.

The winners will be more precise.

They will start with the workload. They will understand where value is created. They will build infrastructure around the operational reality of the task. They will know when to centralise, when to regionalise and when to move closer to the edge.

The real opportunity

The first phase of AI infrastructure was about securing GPUs. The second was about securing power. The next phase will be about deciding where intelligence should run.

That is why inference matters so much.

Inference is where AI meets the enterprise. It is where models become workflows, where experimentation becomes operating cost, where cloud strategy becomes workload strategy, and where infrastructure has to prove its value every day.

The largest campuses will still matter. The biggest hyperscalers will still shape the market. But the next advantage will not come from scale alone. It will come from the ability to place, power, govern and optimise inference across a stratified infrastructure system.

The real opportunity is not to choose between central AI infrastructure and distributed AI infrastructure. It is to connect them into a coherent system. That is the workload layer.

The companies that win will not simply own the biggest AI factories. They will operate the smartest AI workload networks.

And in that market, the workload layer becomes the real battleground.